
In this article, I am telling a story of using TabNet as a solution for self-supervised learning on tabular data.

Self-supervised learning has been shown to be very effective in learning useful representations, yet much of that success has been achieved on data types such as images, audio, and text. The success is mainly enabled by taking advantage of spatial, temporal, or semantic structure in the data through augmentation. However, such structure may not exist in the tabular datasets commonly used in many fields. Tabular self-supervised learning (tabular-SSL) is therefore more challenging than SSL on structured domains like images, audio, and text, since each tabular dataset can have a different structure. Contrastive learning in particular is hardly applicable to the tabular domain, as it requires data augmentation by applying a set of predefined transformations, and data augmentation for tabular data has been difficult due to the unique structure and high complexity of tabular data.

Deep learning techniques such as autoencoders have … In this paper, we fill this gap by proposing novel self- and semi-supervised learning frameworks for tabular data, which we refer to collectively as VIME (Value Imputation and Mask Estimation).

In addition, existing methods propose three main components together: model structure, self-supervised learning method, and data augmentation. As a result, previous works have compared performance without considering these components separately, and it is not clear how each component affects the actual performance. In this study, we focus on data augmentation to address these issues. We propose a novel data augmentation method, examine where it is most effective, and identify the scope of its application.

Your data is telling your model what the outputs should be given a set of inputs.
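As an illustration of how VIME builds its pretext tasks, the sketch below corrupts a small table in the way the VIME paper describes: a binary mask is sampled with probability `p_m`, masked cells are replaced by values drawn from the same column, and a model would then be trained to estimate which cells were replaced (mask estimation) and to recover the original values (value imputation). The function names `mask_generator` and `pretext_generator`, the seeded `random.Random`, and the list-of-lists representation are assumptions chosen to keep the example self-contained; the encoder and the two prediction heads are not shown.

```python
import random
from typing import List, Tuple

Matrix = List[List[float]]
Mask = List[List[int]]


def mask_generator(p_m: float, n_rows: int, n_cols: int, rng: random.Random) -> Mask:
    """Sample a binary mask where each cell is 1 (to be corrupted) with probability p_m."""
    return [[1 if rng.random() < p_m else 0 for _ in range(n_cols)] for _ in range(n_rows)]


def pretext_generator(mask: Mask, x: Matrix, rng: random.Random) -> Tuple[Matrix, Mask]:
    """Corrupt x where mask == 1 and return (x_tilde, mask_label).

    Illustrative helper (assumption): replacement values come from an independent
    shuffle of each column, i.e. a draw from that feature's empirical marginal.
    """
    n_rows, n_cols = len(x), len(x[0])
    shuffled_cols = []
    for j in range(n_cols):
        col = [x[i][j] for i in range(n_rows)]
        rng.shuffle(col)
        shuffled_cols.append(col)

    # Keep the original value where mask == 0, take the shuffled value where mask == 1.
    x_tilde = [
        [shuffled_cols[j][i] if mask[i][j] else x[i][j] for j in range(n_cols)]
        for i in range(n_rows)
    ]
    # A shuffled value can equal the original, so the label marks only cells that actually changed.
    mask_label = [
        [int(x_tilde[i][j] != x[i][j]) for j in range(n_cols)] for i in range(n_rows)
    ]
    return x_tilde, mask_label


if __name__ == "__main__":
    rng = random.Random(0)
    x = [[1.0, 10.0, 100.0], [2.0, 20.0, 200.0], [3.0, 30.0, 300.0], [4.0, 40.0, 400.0]]
    m = mask_generator(0.3, len(x), len(x[0]), rng)
    x_tilde, mask_label = pretext_generator(m, x, rng)
    # Hypothetical training targets: an encoder would read x_tilde and predict
    # mask_label (mask estimation) and x (value imputation).
    print(x_tilde)
    print(mask_label)
```

Shuffling each column independently keeps every feature's marginal distribution intact, so corrupted cells look plausible feature by feature; recomputing the mask afterwards avoids asking the model to detect a "replacement" that happens to equal the original value.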

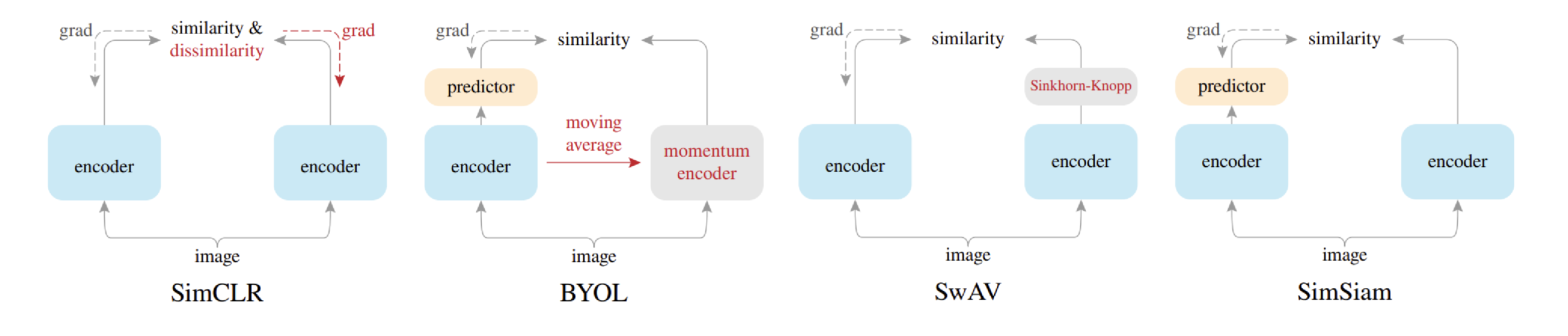

This setup, where you have features and labels, is referred to as supervised learning. While tree-based methods outperform deep learning (DL) methods in supervised learning, recent literature reports that self-supervised learning with Transformer-based models outperforms tree-based methods. In the existing literature on self-supervised learning for tabular data, contrastive learning is the predominant method, and in contrastive learning, data augmentation is important for generating different views of each sample.

Dimensionality reduction (DR) is used to explore high-dimensional data in many applications.

The paper "Rethinking Data Augmentation for Tabular Data in Deep Learning" by Soma Onishi and Shoya Meguro opens its abstract by observing that tabular data is the most widely used data format in machine learning (ML).
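To make the idea of generating different views concrete, here is a minimal sketch of a contrastive objective on tabular rows. It is an assumption, not the method of any work mentioned above: views are produced by random feature corruption similar to the one used in SCARF (each cell is, with probability `p`, replaced by a value sampled from the same column), and the loss is a simplified one-directional InfoNCE with cosine similarity and a hypothetical `temperature` of 0.5. The helpers `corrupt_view`, `cosine`, and `info_nce` are illustrative names, and the encoder that would map each view to an embedding is left out.

```python
import math
import random
from typing import List, Sequence

Matrix = List[List[float]]


def corrupt_view(x: Matrix, p: float, rng: random.Random) -> Matrix:
    """Return a corrupted copy of x (assumption: SCARF-style corruption).

    Each cell is, with probability p, replaced by a value drawn uniformly from
    the same column, i.e. from that feature's empirical marginal distribution.
    """
    n_cols = len(x[0])
    columns = [[row[j] for row in x] for j in range(n_cols)]
    return [
        [rng.choice(columns[j]) if rng.random() < p else row[j] for j in range(n_cols)]
        for row in x
    ]


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; the small epsilon avoids division by zero for all-zero vectors."""
    dot = sum(u * v for u, v in zip(a, b))
    norm_a = math.sqrt(sum(u * u for u in a))
    norm_b = math.sqrt(sum(v * v for v in b))
    return dot / (norm_a * norm_b + 1e-12)


def info_nce(z1: Matrix, z2: Matrix, temperature: float = 0.5) -> float:
    """Simplified one-directional InfoNCE loss (assumed variant).

    Row i of z1 is the anchor, row i of z2 is its positive, and every other row
    of z2 acts as a negative. Returns the mean loss over the batch.
    """
    total = 0.0
    for i, anchor in enumerate(z1):
        logits = [cosine(anchor, other) / temperature for other in z2]
        # Log-sum-exp with max subtraction for numerical stability.
        peak = max(logits)
        log_denominator = peak + math.log(sum(math.exp(v - peak) for v in logits))
        total += log_denominator - logits[i]
    return total / len(z1)


if __name__ == "__main__":
    rng = random.Random(42)
    x = [[0.1, 5.0, 3.0], [0.9, 1.0, 2.0], [0.4, 7.0, 0.5], [0.7, 2.0, 9.0]]
    # Two independently corrupted views of the same rows. A real setup would pass
    # each view through an encoder; here the raw rows stand in for embeddings.
    view_a = corrupt_view(x, p=0.3, rng=rng)
    view_b = corrupt_view(x, p=0.3, rng=rng)
    print(info_nce(view_a, view_b))
```

The loss is small when each row's two views are more similar to each other than to the views of other rows, which is what pushes an encoder to ignore the corrupted features and keep the information shared across views.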
